Since the relational database era, the end-to-end data pipeline has changed dramatically: data generation, collection, ingestion, storage, processing and analytics, with machine learning and data science as the cherry on top. One of the first widely adopted platforms for handling large volumes of data through distributed storage and parallel processing was Apache Hadoop, and it is still widely used today. In this short blog, I will try to paint a high-level picture of Apache Hadoop and its most popular integrations.

Let’s look at some history

Hadoop was created by Doug Cutting and Mike Cafarella in 2005, based on the Google File System paper published in October 2003. It was originally developed to support Apache Nutch (an open-source web crawler) and was later moved into a separate sub-project. Doug, who was working at Yahoo! at the time, named the project after his son’s toy elephant 🙂 Hadoop became a top-level Apache Software Foundation (ASF) project in 2008, and version 1.0 was released in December 2011.

What makes Hadoop special

The core idea behind Hadoop was to process large volumes of data in a distributed way using commodity hardware. It was also assumed that hardware failures occur frequently, so the software itself should handle them and provide fault tolerance. Commodity does not mean cheap, unusable hardware. It just means hardware that is relatively inexpensive, widely available and basically interchangeable with other hardware of its type. Unlike purpose-built hardware designed for a specific IT function, commodity hardware can perform many different functions (the same hardware can be used to run a web server, a Linux server, an FTP server, etc.).

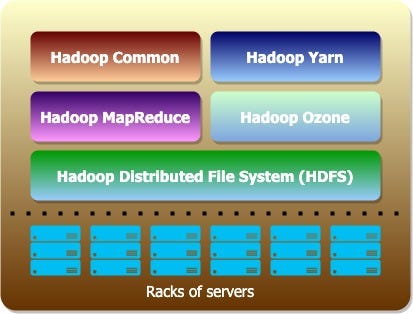

Hadoop can be configured to run on a single server (for development and testing) or as a full-scale multi-node cluster (for production). In either case, the core components of Hadoop are:

Hadoop Distributed File System (HDFS)

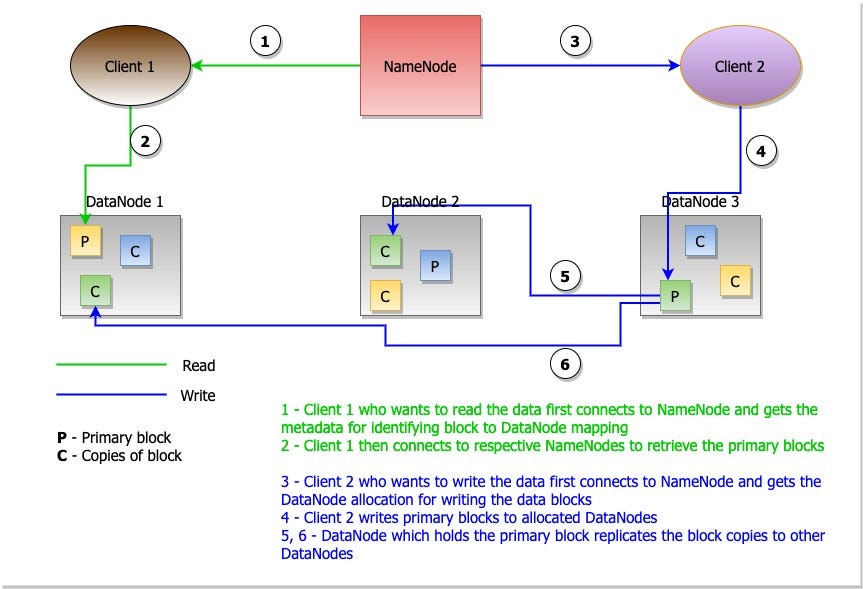

HDFS is a highly fault-tolerant distributed file system and is designed to be deployed on low-cost hardware. HDFS provides high throughput access to application data and is suitable for applications that have large data sets

HDFS has a master/slave architecture with a NameNode (master) and a number of DataNodes (slaves). HDFS exposes a file system namespace and allows user data to be stored in files. Internally, a file is split into one or more blocks (128 MB each by default) and these blocks are stored on DataNodes. The NameNode manages the metadata, including the mapping of blocks to DataNodes. For fault tolerance, each block has multiple copies (3 by default) stored on different DataNodes. At a high level, a client asks the NameNode where the blocks of a file live (or where they should be written) and then reads or writes the block data directly on the DataNodes.
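To make the block and replica idea concrete, here is a minimal sketch in plain Python. It is not HDFS code: the function names, the DataNode names (`dn1` to `dn4`) and the round-robin placement are assumptions made for illustration, and real HDFS placement is rack-aware.

```python
BLOCK_SIZE = 128 * 1024 * 1024  # default HDFS block size (128 MB)
REPLICATION = 3                 # default replication factor


def split_into_blocks(file_size, block_size=BLOCK_SIZE):
    """Return the size in bytes of each block a file of file_size bytes occupies."""
    full, rest = divmod(file_size, block_size)
    return [block_size] * full + ([rest] if rest else [])


def place_replicas(num_blocks, datanodes, replication=REPLICATION):
    """Toy NameNode metadata: map each block index to the DataNodes holding a copy.

    Round-robin placement is an assumption for illustration only.
    """
    if replication > len(datanodes):
        raise ValueError("need at least as many DataNodes as replicas")
    block_map = {}
    for block in range(num_blocks):
        block_map[block] = [
            datanodes[(block + r) % len(datanodes)] for r in range(replication)
        ]
    return block_map


# A hypothetical 300 MB file on a hypothetical four-node cluster
blocks = split_into_blocks(300 * 1024 * 1024)
block_map = place_replicas(len(blocks), ["dn1", "dn2", "dn3", "dn4"])

for block, nodes in block_map.items():
    print(f"block {block}: {blocks[block] // (1024 * 1024)} MB on {nodes}")
```

The 300 MB file ends up as two full 128 MB blocks plus one 44 MB block, and losing any single DataNode still leaves two copies of every block.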

Hadoop MapReduce

It is a framework for easily writing applications which process vast amounts of data (multi-terabyte data-sets) in parallel on large clusters (thousands of nodes) of commodity hardware in a reliable, fault-tolerant manner.

A MapReduce application consists of three kinds of tasks: a mapper task, a reducer task and an optional combiner task. The framework splits the input into independent chunks called InputSplits, and each mapper task processes one split, so the mappers run in parallel and their number depends on the number of InputSplits. An InputSplit is the smallest chunk of data that can be processed independently, and by default its size matches the HDFS block size (128 MB). The framework then shuffles and sorts the mapper outputs by key, and the reducer aggregates the values for each key to generate the final output. The combiner is often described as a mini-reducer: it performs a small, local combine operation on the mapper side to reduce the data volume before the reducer picks it up.
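The classic word count shows how the three tasks fit together. The sketch below is plain Python that imitates the flow on a single machine. It does not use the Hadoop API, and the function names and sample splits are made up for illustration.

```python
from collections import Counter, defaultdict


def mapper(split):
    """Emit a (word, 1) pair for every word in one input split."""
    return [(word.lower(), 1) for word in split.split()]


def combiner(pairs):
    """Pre-aggregate one mapper's output locally to cut the shuffle volume."""
    counts = Counter()
    for word, n in pairs:
        counts[word] += n
    return list(counts.items())


def shuffle(mapper_outputs):
    """Group values by key and sort the keys, as the framework does before reduce."""
    grouped = defaultdict(list)
    for pairs in mapper_outputs:
        for word, n in pairs:
            grouped[word].append(n)
    return sorted(grouped.items())


def reducer(word, values):
    """Sum all partial counts for one word."""
    return word, sum(values)


# Two hypothetical input splits, each handled by its own mapper
splits = ["big data big cluster", "data node data block"]
combined = [combiner(mapper(s)) for s in splits]
result = dict(reducer(word, values) for word, values in shuffle(combined))
print(result)
```

Each mapper emits four pairs, but the combiner shrinks that to three per split before anything is shuffled, which is exactly the saving the combiner exists for.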

Hadoop YARN (Yet Another Resource Negotiator)

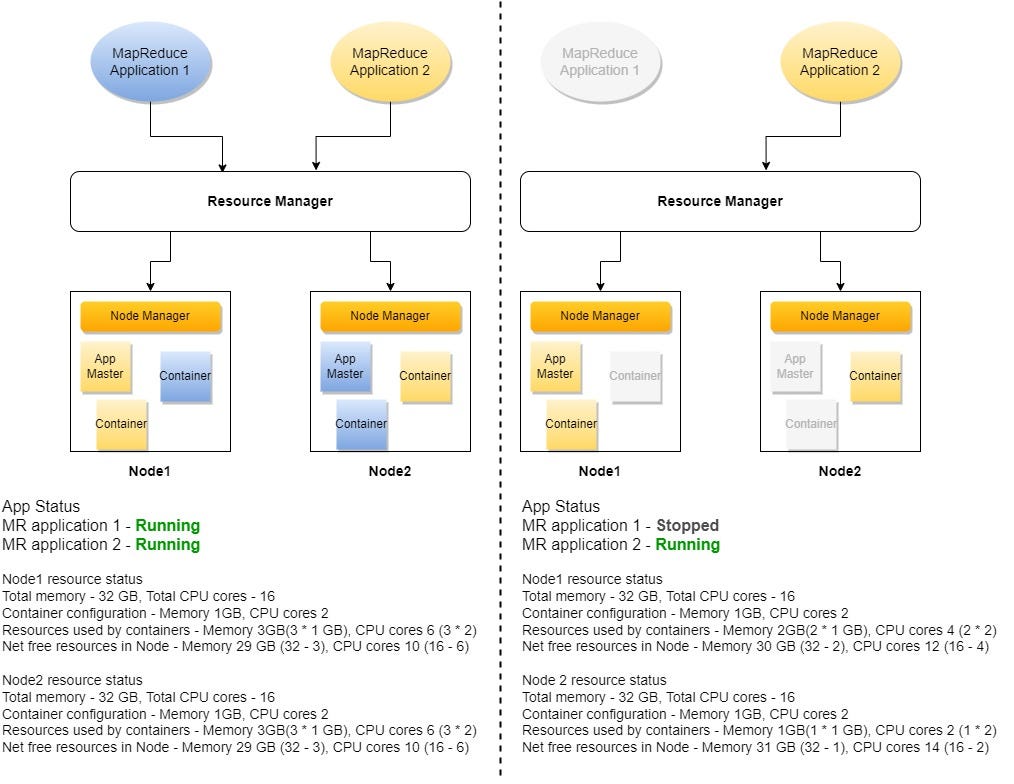

Interesting name, isn’t it? YARN takes care of resource management in a Hadoop cluster. Any application that runs on a Hadoop cluster gets the resources it needs (CPU cores and memory) from YARN.

The smallest unit of resource that YARN can allocate is known as a container. A container is similar to an actual server: both have a number of CPU cores and a finite amount of memory. But the server is physical and the container is logical, meaning many containers can be allocated on a single server. The memory and CPU allocated to a single container are governed by YARN configuration properties.
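As a rough back-of-the-envelope sketch, the number of identical containers that fit on one server is limited by whichever resource runs out first. The node size and container sizes below are hypothetical. On a real cluster the node capacity comes from properties such as `yarn.nodemanager.resource.memory-mb` and `yarn.nodemanager.resource.cpu-vcores`, and whether CPU is counted at all depends on how the scheduler is configured.

```python
def containers_per_node(node_memory_mb, node_vcores,
                        container_memory_mb, container_vcores):
    """How many identical containers fit on one node.

    Assumes both memory and vcores are enforced; the scarcer resource wins.
    """
    by_memory = node_memory_mb // container_memory_mb
    by_cpu = node_vcores // container_vcores
    return min(by_memory, by_cpu)


# Hypothetical node offering 64 GB of memory and 16 vcores to YARN
node_memory_mb = 64 * 1024
node_vcores = 16

small = containers_per_node(node_memory_mb, node_vcores, 4096, 1)
large = containers_per_node(node_memory_mb, node_vcores, 8192, 4)
print(f"4 GB / 1 vcore containers per node: {small}")
print(f"8 GB / 4 vcore containers per node: {large}")
```

With these numbers the small containers are limited equally by memory and CPU (16 per node), while the large ones are limited by CPU (4 per node) even though memory would allow 8.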

Lastly, let’s look quickly at the core services in YARN:

Resource Manager (RM): The central authority that allocates containers for all applications

Node Manager (NM): A service running on each server in the cluster, responsible for launching containers, monitoring their resource usage and reporting it to the Resource Manager

Application Master (AM): A per-application service that negotiates containers from the RM for that specific application. The AM itself runs in a container

Hadoop Common and Hadoop Ozone

Hadoop Common provides the shared libraries and utilities that support the other Hadoop modules.

Ozone is a scalable, redundant, and distributed object store for Hadoop (similar to AWS S3 or Azure Blob Storage) which can scale to billions of objects of varying sizes.

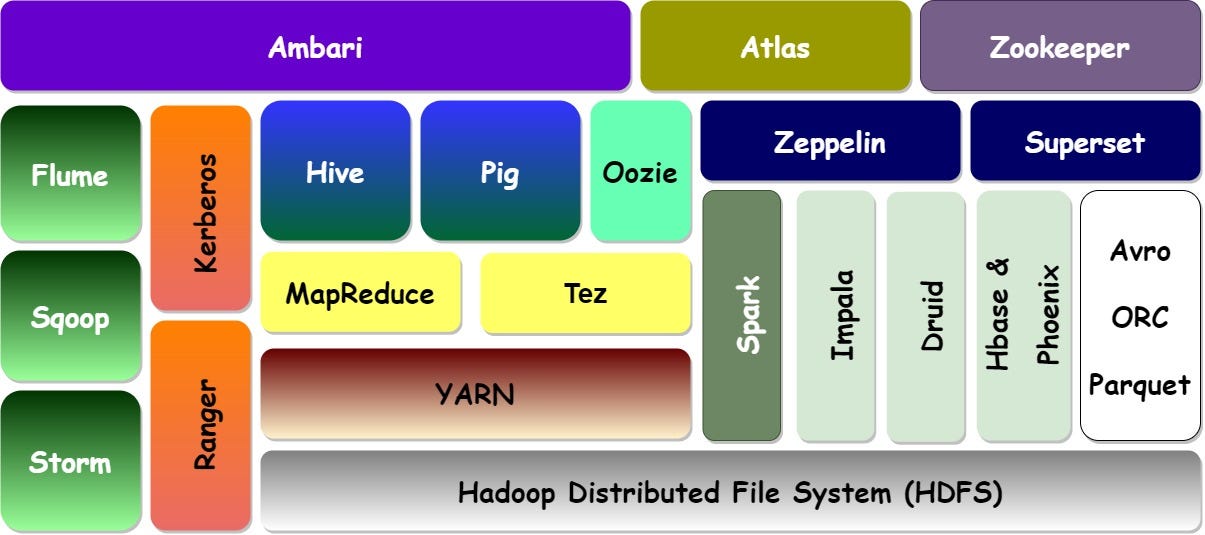

The ecosystem of rich integrations

So far, the focus has been on the core components. Let’s shift the focus a little to some of the most popular integrations that run on top of Hadoop and elevate it to an enterprise data platform.

Data movement

Apache Flume: A distributed, reliable, and available service for efficiently collecting, aggregating, and moving large amounts of log data from a variety of sources to HDFS

Apache Sqoop: A service for importing structured data into HDFS and exporting it back out. Typically, the sources and targets are relational databases

Apache Storm: A distributed real-time computation system. Apache Storm makes it easy to reliably process unbounded streams of data, a.k.a real-time processing

Data processing

Hive: Hive is data warehouse software that facilitates reading, writing, and managing large datasets residing in distributed storage (such as HDFS) using SQL

Pig: Pig is a data processing framework powered by its own scripting language (Pig Latin) for reading, writing and processing data stored in HDFS

Tez: Apache Tez is a framework comparable to MapReduce. It runs a job as a single graph of tasks, so intermediate results do not have to be written to HDFS between stages, which generally gives better performance than MR. When Tez is used with Hive, vectorized query execution (processing a batch of rows at a time rather than a single row) improves performance further

Hive and Pig make use of either MR or Tez for running data processing jobs

Security

Kerberos: In an on-premises Hadoop cluster, Kerberos is used as the authentication mechanism among the cluster components and between external services and Hadoop

Ranger: Ranger is the service for authorization across all Hadoop components. Ranger security policies range from directory, file, database and table access to fine-grained access within tables

Oozie: A workflow scheduler running on the Hadoop platform. Oozie is integrated with the rest of the Hadoop stack and supports several types of Hadoop jobs out of the box

Spark: An in-memory computation framework which provides batch and real-time data processing along with machine learning and graph processing capabilities

Data stores & Query engines

Impala: A massively parallel processing, highly performant SQL query engine with in-memory processing capabilities to provide very low latency results on large data volumes

Druid: A column-oriented, distributed data store whose core design combines ideas from data warehouses, time-series databases, and search systems to create a high-performance real-time analytics database

HBase & Phoenix: HBase is a distributed, column-oriented NoSQL data store which provides random read/write access to big data.

Phoenix is a SQL query engine that runs on top of HBase and adds OLTP-style capabilities, so HBase data can be queried with a variety of SQL query patterns

File formats

Avro: It is a row-oriented data serialization format which uses JSON to define the schema and creates compact binary data when serialized. It supports full schema evolution

ORC (Optimized Row Columnar): It is an optimized column-oriented data storage format mainly used in Hive. It also underpins Hive’s transactional tables, which give Hive transactional capabilities similar to an OLTP database

Parquet: Similar to ORC, it is another column-oriented data storage format which is widely used with various data processing platforms such as Apache Spark
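The difference between row-oriented and column-oriented layouts is easy to see with a toy example. The sketch below uses plain Python lists and dictionaries with made-up records. Real formats such as Avro, ORC and Parquet add encoding, compression and statistics on top of this basic idea.

```python
# Row-oriented: each record is stored together (the Avro style)
records = [
    {"id": 1, "city": "Kochi", "amount": 120},
    {"id": 2, "city": "Pune", "amount": 80},
    {"id": 3, "city": "Kochi", "amount": 200},
]


def to_columns(rows):
    """Pivot row-oriented records into one list per column (the ORC/Parquet style)."""
    columns = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return columns


columns = to_columns(records)

# An analytical query such as SUM(amount) has to walk every full record
# in the row layout, but only needs a single column in the columnar layout.
total_from_rows = sum(row["amount"] for row in records)
total_from_columns = sum(columns["amount"])
print(columns)
print(total_from_rows, total_from_columns)
```

This is why column-oriented formats suit analytics that scan a few columns over many rows, while row-oriented formats suit writing and reading whole records.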

Zeppelin: A web-based notebook which brings data exploration, visualization, sharing and collaboration features to Hadoop, used by data analysts, data scientists, etc.

Superset: A powerful web-based data visualization platform which provides rich visualization and dashboarding capabilities with connectivity to Hive, Druid, HBase, Impala and other data stores

Ambari: A central management service for Hadoop which enables provisioning, managing, and monitoring Apache Hadoop clusters

Atlas: An open-source metadata management and governance system designed to help enterprises easily find, organize, and manage data assets on the Hadoop platform

ZooKeeper: A distributed coordination service that helps to manage a large number of hosts, such as those in a Hadoop cluster

Hadoop enterprise

CDP (Cloudera Data Platform)

AWS EMR (Elastic MapReduce)

Azure HDInsight

Google Cloud Dataproc


Manu Mukundan, Data Architect, TE, Nest Digital